Number of Semantic Web Tools Passes 1000 for First Time; Many Other Changes

We have been maintaining Sweet Tools, AI3's listing of semantic Web and -related tools, for a bit over five years now. Though we had switched to a structWSF-based framework that allows us to update it on a more regular, incremental schedule [1], the listing, like all databases, needs to be reviewed and cleaned up periodically. We have just completed the most recent cleaning and update, and we are now committing to do so annually.

Thus, this is the inaugural ‘State of Tooling for Semantic Technologies’ report, and, boy, is it a humdinger. There have been more changes, and more important changes, in this past year than in all four previous years combined. I think it fair to say that semantic technology tooling is now reaching a mature state, the trends of which likely point to future changes as well.

In this past year more tools have been added, more tools have been dropped (or abandoned), and more tools have taken on a professional, sophisticated nature. Further, for the first time, the number of semantic technology and -related tools has passed 1000. This is remarkable, given that more tools have been abandoned or retired than ever before.

We first present our key findings and then overall statistics. We conclude with a discussion of observed trends and implications for the near term.

Key Findings

Some of the key findings from the 2011 State of Tooling for Semantic Technologies are:

- As of the date of this article, there are 1010 tools in the Sweet Tools listing, the first time it has passed 1000 total tools

- A total of 158 new tools have been added to the listing in the last six months, an increase of 17%

- 75 tools have been abandoned or retired, the most removed at any period over the past five years

- A further 6%, or 55 tools, have been updated since the last listing

- Though open source has always been an important component of the listing, it now constitutes more than 80% of all listings; with dual licenses, open source availability is about 83%. Online systems contribute another 9%

- Key application areas for growth have been in SPARQL, ontology-related areas and linked data

- Java continues to dominate as the most important language.

Many of these points are elaborated below.
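The percentages quoted in the findings above can be cross-checked with a little arithmetic. The sketch below is only a sanity check, not part of the original survey method. It assumes (the article does not state this) that the previous listing size equals the current total with additions backed out and removals restored, and that both percentages are relative to that previous total. The variable names are illustrative.

```python
# Figures quoted in the key findings above.
current_total = 1010
added = 158
removed = 75
updated = 55

# Assumption (not stated in the article): the previous listing size is the
# current total with additions backed out and removals restored.
previous_total = current_total - added + removed

# Shares relative to the inferred previous total (also an assumption).
added_share = added / previous_total
updated_share = updated / previous_total
net_growth = added - removed

print(f"previous total (inferred): {previous_total}")
print(f"new tools as share of previous total: {added_share:.1%}")
print(f"updated tools as share of previous total: {updated_share:.1%}")
print(f"net growth: {net_growth} tools")
```

Under that assumption the inferred previous total is 927 tools, and the quoted 17% and 6% figures both round correctly from it.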

The Statistical Picture

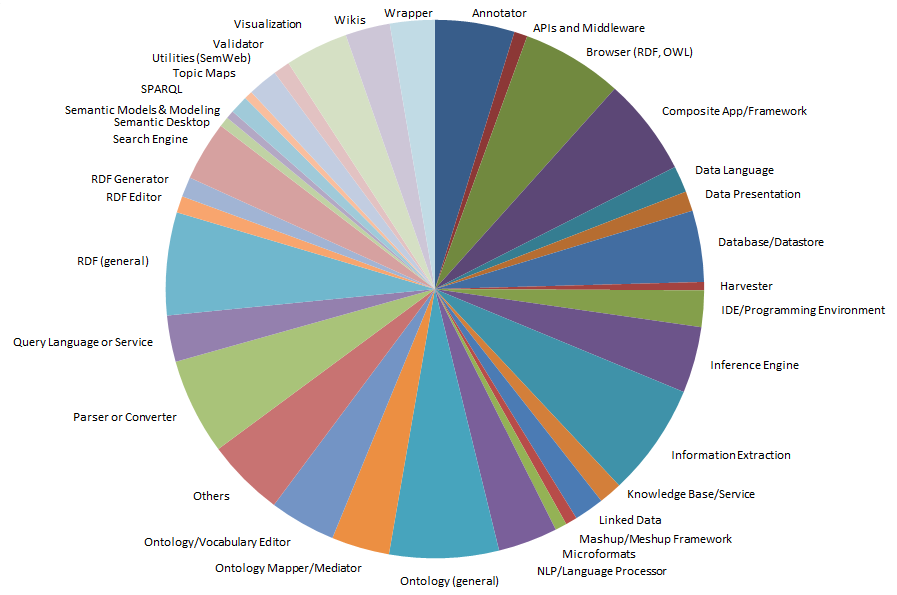

The updated Sweet Tools listing now includes nearly 50 different tools categories. The most prevalent categories, each with over 6% of the total, are information extraction, general RDF tools, ontology tools, browser tools (RDF, OWL), and parsers or converters. The relative share by category is shown in this diagram:

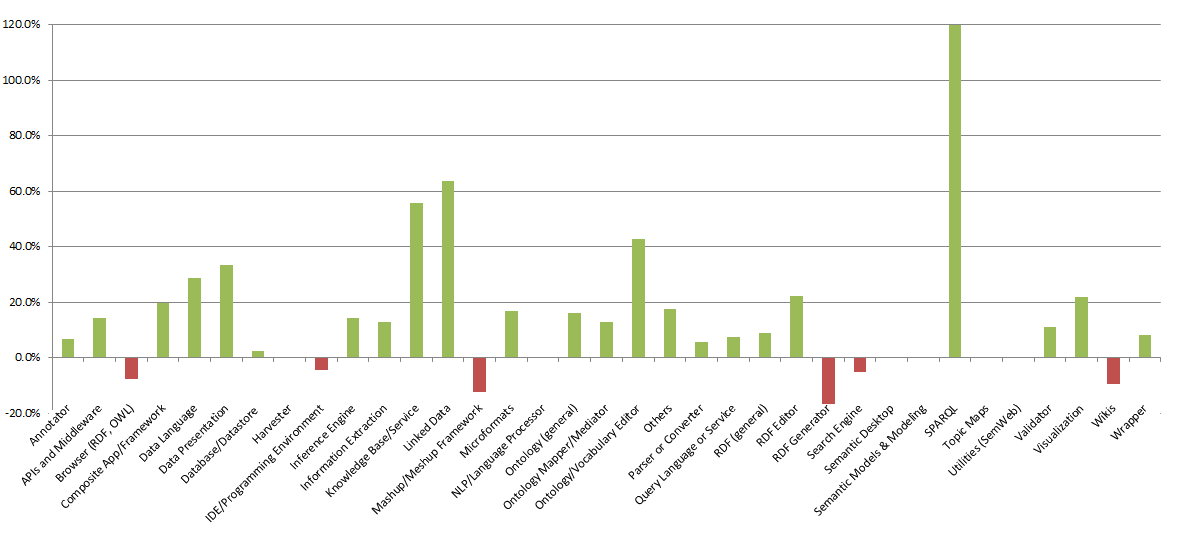

Since the last listing, the fastest growing categories have been SPARQL, linked data, knowledge bases and all things related to ontologies. The relative changes by tools category are shown in this figure:

Though it is true that some of this growth is the result of discovery, based on our own tool needs and investigations, we have also been monitoring this space for some time and serendipity is not a compelling explanation alone. Rather, I think that we are seeing both an increase in practical tools (such as for querying), plus the trends of linked data growth matched with greater sophistication in areas such as ontologies and the OWL language.

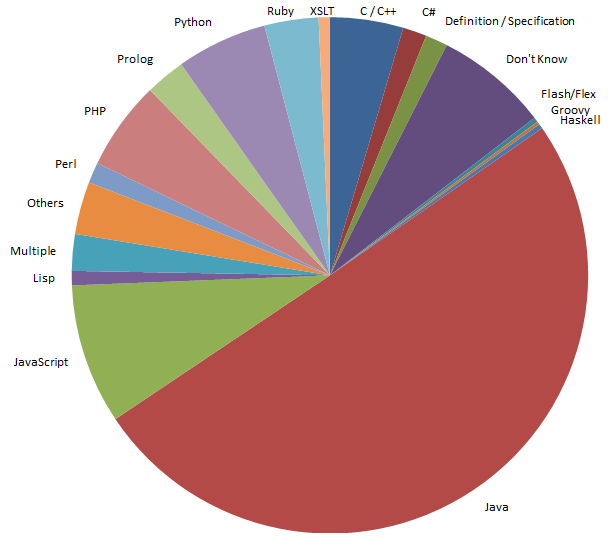

The languages these tools are written in have also been pretty constant over the past couple of years, with Java remaining dominant. Java has represented half of all tools in this space, which continues with the most recent tools as well (see below). More than a dozen programming or scripting languages have at least some share of the semantic tooling space:

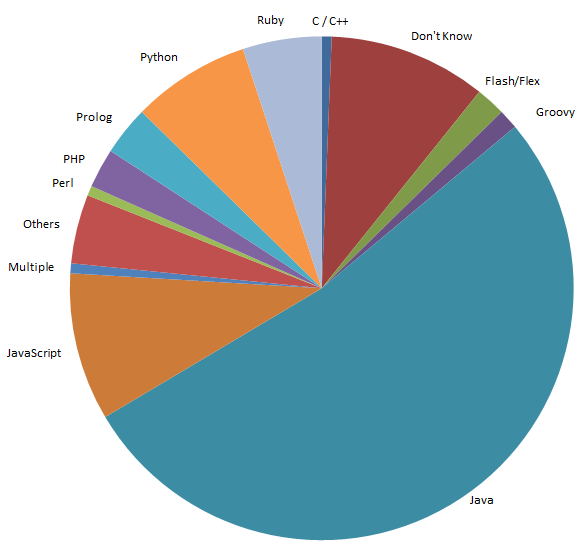

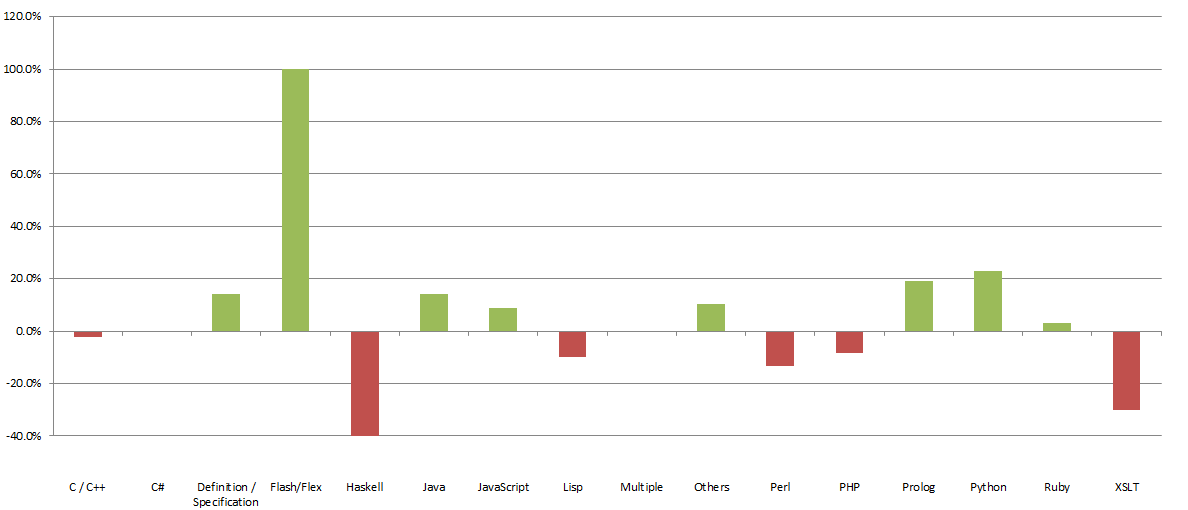

With only 160 new tools it is hard to draw firm trends, but it does appear that some languages (Haskell, XSLT) have fallen out of favor, while popularity has grown for Flash/Flex (from a small base), Python and Prolog (with the growth of logic tools):

PHP will likely continue to see some emphasis because of relations to many content management systems (WordPress, Drupal, etc.), though both Python and Ruby seem to be taking some market share in that area.

New Tools

The newest tools added to the listing show somewhat similar trends. Again, Java is the dominant language, but with much increased use of JavaScript, Python and Prolog.

The higher incidence of Prolog is likely due to the parallel increase in reasoners and inference engines associated with ontology (OWL) tools.

The increase in comprehensive tool suites and use of Eclipse as a development environment would appear to secure Java’s dominance for some time to come.

Trends and Observations

These dry statistics tend to mask the feel one gets when looking at most of the individual tools across the board. Older academic and government-funded project tools are finally getting cleaned out and abandoned. Those tools that remain have tended to get some version upgrades and improved Web sites to accompany them.

The general feel one gets with regard to semantic technology tooling at the close of 2011 has these noticeable trends:

- A three-tiered environment – the tools seem to segregate into: 1) a bottom tier of tools (largely) developed by individuals or small groups, now most often found on Google Code or Github; 2) a middle-tier of (largely) government-funded projects, sometimes with multiple developers, often older, but with no apparent driving force for ongoing improvements or commercialization; and 3) a top-tier of more professional and (often) commercially-oriented tools. The latter category is the most noticeable with respect to growth and impact

- Professionalism – the tools in the apparent top tiers show more professionalism and better (and more attractive) packaging. This professionalism is especially true for the frameworks and composite applications. But it also applies to many of the EU-funded projects, as Europe has always been a huge source of new tool developments

- More complete toolsets – similarly, the upper levels of tools are oriented to pragmatic problems and problem-solving, which often means they embody multiple functions and more complete tooling environments. This category actually appears to be the most visible one exhibiting growth

- Changing nature of academic releases – yet, even the academic releases seem to be increasing in professionalism and completeness. Though in the lowest tier it is still possible to see cursory or experimental tool releases, newer academic releases (often) seem to be more strategically oriented and parts of broader programmatic emphases. Programs like AKSW from the University of Leipzig or the Freie Universität Berlin or Finland’s Semantic Computing Research Group (SeCo), among many others, tend to be exemplars of this trend

- Rise of commercial interests and enterprise adoption – the growing maturity of semantic technologies is also drawing commercial interest, and the incubation of new start-ups by academic and research institutions acts to reinforce the above trends. Promising projects and tools are now much more likely to be spun off as potential ventures, with accompanying better packaging, documentation and business models

- Multiple languages and applications – with this growing complexity and sophistication has also come more complicated apps, combining multiple languages and functions. In fact, for some time the Sweet Tools listing has been justifiably criticized by some as overly “simplifying” the space by classifying tools under (largely) single applications or single languages. By the 2012 survey, it will likely be necessary to better classify the tools using multiple assignments

- Google Code over SourceForge for open source (and an increase in Github, as well) – virtually all projects on SourceForge now feel abandoned or less active. The largest source of open source projects in the semantic technology space is now clearly Google Code. Though its footprint is smaller today, Github is also attracting many of the newer open source projects. Open source hosting environments are clearly in flux.

I have said this before, and been wrong about it before, but it is hard to see the tooling growth curve continuing at its current slope. I think we will see many individual tools spring up on open source hosting sites like Google Code and Github, perhaps at roughly the same steady release rate. But I think old projects will increasingly be abandoned, and older projects will not tend to remain available for as long. A relatively few established open source standards, like Solr and Jena, will be the exception; for most open source tools I think we will see shorter shelf lives moving forward. This will lead to a younger tools base than was the case five or more years ago.

I also think we will continue to see the dominance of open source. Proprietary software has increasingly been challenged in the enterprise space. And, especially in semantic technologies, we tend to see many open source tools that are as capable as proprietary ones, and generally more dynamic as well. The emphasis on open data in this environment also tends to favor open source.

Yet, despite the professionalism, sophistication and complexity trends, I do not yet see massive consolidation in the semantic technology space. While we are seeing a rapid maturation of tooling, I don’t think we have yet seen a similar maturation in revenue and business models. While notable semantic technology start-ups like Powerset and Siri have been acquired and are clear successes, these wins still remain much in the minority.

Hi Mike,

I’m not sure what process you use to discover tools, but I wanted to point you to our new tool, Butterflyzer. We’re calling it a “Content Intelligence Environment” and I think it’s pretty unique. And while it’s primarily web-oriented for now, it could easily be adapted to other semantic technologies.

http://butterflyzer.com/

There is an open Beta Preview available now and we could really use feedback.

cheers,

Miles

Hi Mike,

Thanks for your insight about the state of the art in various semantic tools; this is indeed very interesting!

Would you be happy to look at other semantic tools provided by Veda? We would be glad to bring to your attention other tools from our offering that include Veda Ontology Editor, Veda Ontology Reasoner and Veda Rule Engine.

We were very happy to sponsor the recent Semantic Tech & Biz event in Washington, DC as well. You may also visit our website http://veda.emudhra.com for a more comprehensive list of other semantic and text mining tools provided by Veda.

Should you need any clarification, do not hesitate to reach out to us through the website.

Cheers

-Venky

Dear Mike,

I would be very glad if you could include RDFSharp (http://rdfsharp.codeplex.com/) in your listing of tools. It is a very new open source library for RDF management and it is written in .NET (C# language).

Thanks a lot,

Marco