Writing and Sharing Data Can be Lightened Up

Ever since I first started to learn in earnest about ontology, something has been gnawing at me. The term seemed to be (shall I say?) an obtuse one whose obscurity was not the result of subtle precision or technicality, but rather one of fuzziness. As I introduced my Intrepid Guide to Ontology two years ago, I noted:

Since then, I have continued to find ontology one of the hardest concepts to communicate to clients and quite a muddled mess even as used by practitioners. I have come to the conclusion that this problem is not because I have failed to grasp some ephemeral nuance, but because the term as used in practice is indeed fuzzy and imprecise.

What Isn’t an Ontology?

Even two years ago, I noted more than 40 different types of information structure that have at one time or another been labelled as an example of an “ontology”:

Since then, I could add even more terms to this list.

Because there is so little precision as to what ontology means, the term has been sloppily defined and applied. As I have harped upon many times regarding semantic Web terminology, this is a sad state of affairs for the semWeb endeavor, which has meaning at the core of its purpose.

I’m pretty sure that the original intent in embracing the concept of ontology within the realm of knowledge representation was not to see this term so broadly misused or misapplied. I suspect, as well, that if we could sharpen up our understanding and remove some of the fuzziness, we could improve communications with the lay public across many levels of the semWeb enterprise.

The Useful Distinction of the TBox and ABox

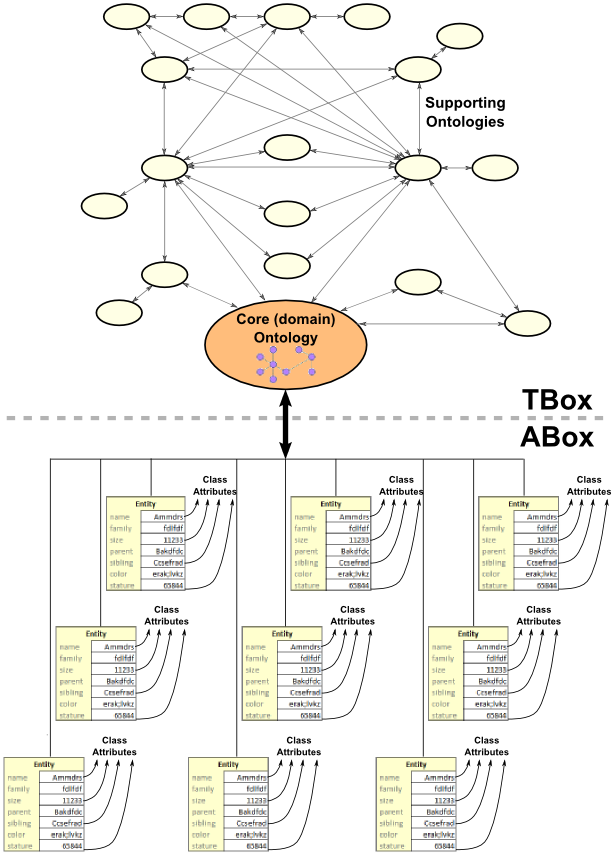

Recently, I have been looking to the semantic Web’s roots in description logics. One of my writings, Thinking ‘Inside the Box’ with Description Logics, looked at the conceptual distinctions between the so-called ‘TBox’ and ‘ABox’. That is, a knowledge base consists of a logical schema of concepts and roles and the relationships between them (the TBox), which is populated by the actual data (instances) asserting memberships and attributes, the “facts” (the ABox).

By analogy, in a conventional relational database system, the database or logical schema would correspond to the TBox; the actual data records or tables would correspond to the ABox. Often, the term ontology is used to cover both ABox and TBox statements (which, I argue, only makes the understanding of the ‘ontology’ concept more difficult).
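To make the split concrete, here is a minimal Python sketch. It is only an illustration: the concept names (`Thing`, `Person`, `Author`), the individual `jane` and the helper functions are all invented for this example and come from no particular ontology or library. The TBox holds only concept relationships; the ABox holds only membership assertions about individuals.

```python
# TBox: the schema. Here it is just a subclass hierarchy between concepts.
# All names are hypothetical and chosen only for illustration.
tbox = {
    "subclass_of": {"Author": "Person", "Person": "Thing"},
}

# ABox: the facts. Each entry asserts that an individual belongs to a concept.
abox = [
    ("jane", "Author"),
]


def superclasses(concept, tbox):
    """Walk the TBox upward from a concept, yielding each ancestor in turn."""
    parents = tbox["subclass_of"]
    while concept in parents:
        concept = parents[concept]
        yield concept


def is_instance_of(individual, concept, abox, tbox):
    """True if the ABox asserts membership directly or via a TBox ancestor."""
    return any(
        asserted == concept or concept in superclasses(asserted, tbox)
        for name, asserted in abox
        if name == individual
    )


print(is_instance_of("jane", "Person", abox, tbox))
```

Note that the ABox entry says nothing about `Person` or `Thing`; that knowledge lives entirely in the TBox, which is the point of keeping the two apart.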

My recent writing, Back to the Future with Description Logics, discussed at some length the advantages of keeping the TBox and ABox separate. This current article now expands on those thoughts, particularly with respect to the definition and understanding of ontology.

The starting point for this new mindset is to return to the distinction, prevalent in relational databases, between data records or data tables and the logical schema.

So Many Structs, So Little Time

The last time I took a census, about a year ago, there were more than 100 converters of various record and data structure types to RDF [2]. These converters — also sometimes known as translators or ‘RDFizers’ — generally take some input data records with varying formats or serializations and convert them to a form of RDF serialization (such as RDF/XML or N3), often with some ontology matching or characterizations. That last census listed these converters:

Note that MIT’s SIMILE RDFizers also recognizes these formats:

There is a growing list of third-party RDFizers as well:

This wealth of formats shows the robustness of the RDF data model to capture structure and data relationships from virtually any input form. This is what makes RDF so exciting as a canonical target for getting data to interoperate.

Let’s Make this Elementary, Dr. Watson

However, and this is crucial, standard users for decades have preferred simple, text-based and human-readable formats for writing and transferring their structured data.

These various forms, sometimes well specified with APIs and sometimes almost ad hoc as in spreadsheet listings, are what we call ‘structs’. Structs can all be displayed as text and have, at minimum, explicit or inferable key-value pairs to convey data relationships and attributes, with data types and values often noted by various white space, delimiter or other text conventions.

There is no doubt that the vast majority of extant data is found in such formats, including the results of data or information extraction from unstructured text. Indeed, even HTML and many markup languages with their angle bracket-delimited fields fall into this category.

There have literally been hundreds of various formats proposed over decades for conveying lightweight data structures. Most have been proprietary or limited to specific domains or users. Some, such as fielded text, structured text, simple declarative language (SDL), or more recently YAML or its simpler cousin JSON, have become more widely adopted and supported by formal specifications, tools or APIs. JSON, especially, is a preferred form for Web 2.0 applications.

Some, like microformats or this example BibTeX record below (with some non-standard extensions), rely less on syntax conventions and may use reserved keywords (such as AUTHOR or TITLE as shown) to signal the key type for the key-value pair:

    ID_LOCAL  arXiv:0711.3808
    AUTHOR    <a href="#Schramm_O">Oded Schramm</a>
    BIBTYPE   ARTICLE
    ID        arXiv:0711.3808
    JOURNAL   Electron. Res. Announc. Math. Sci.
    PAGES     17--23
    SUBJECTS  geom
    TITLE     Hyperfinite graph limits
    URL       http://www.aimsciences.org/journals/doIpChk.jsp?paperID=3117&mode=full
    URL       http://www.aimsciences.org/journals/displayPapers0.jsp?comments=&pubID=221&journID=14&pubString_num=Volume: 15, 2008 Journal Issue
    VOLUME    15
    YEAR      2008
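A record of this kind is easy to read by machine as well. The sketch below assumes a layout the source does not specify: one pair per line, with the first whitespace-delimited token taken as the reserved keyword and the rest of the line as its value. The sample input borrows a few fields from the record above; the two `example.org` URLs are placeholders, and `parse_struct` is a hypothetical helper, not part of any BibTeX tool.

```python
from collections import defaultdict

# Sample input: a few fields from the record above, one pair per line
# (an assumed layout). The example.org URLs are placeholders.
SAMPLE_RECORD = """\
BIBTYPE ARTICLE
TITLE Hyperfinite graph limits
URL http://example.org/first
URL http://example.org/second
VOLUME 15
YEAR 2008
"""


def parse_struct(text):
    """Parse 'KEYWORD value' lines into a dict mapping keyword -> list of values.

    Values are kept in lists because a keyword (URL here) may repeat.
    """
    record = defaultdict(list)
    for line in text.splitlines():
        parts = line.strip().split(None, 1)  # split on the first whitespace run
        if not parts:
            continue  # skip blank lines
        key = parts[0]
        value = parts[1].strip() if len(parts) > 1 else ""
        record[key].append(value)
    return dict(record)


record = parse_struct(SAMPLE_RECORD)
print(record["TITLE"])
print(record["URL"])
```

Nothing here knows what a title or a volume is; the struct conveys only keys and values, which is exactly why such forms are easy to write and why they need outside context to be related to other data.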

Some of these simple formats have been more successful than others, though none have achieved market dominance. There also appear to be few universal principles that have emerged as to syntax or format. Nonetheless, any of these various struct forms are easy for casual readers to understand and easy for domain experts to write.

For modeling and interoperability purposes, many of these forms are patently inadequate. That is why many of these simpler forms might be called “naïve”: they achieve their immediate purpose of simple relationships and communication, but require understood or explicit context in order to be meaningfully (semantically) related to other forms or data.

Yet, if we have learned nothing else with the phenomenal success of the Web it is this: simplicity trumps elegance or expressivity.

RDF and the Skinny ABox

The RDF (Resource Description Framework) data model is expressed as simple subject–predicate–object “triple” statements. That sounds fancy, but just substitute verb for predicate and noun for subject and object. In other words: Dick sees Jane; or, the ball is round. It may sound like a kindergarten reader, but it is how data can be easily represented and built up into more complex structures and stories.
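As a rough sketch of the idea (plain Python tuples, not a real RDF library), the two statements above can be held as three-part tuples and queried by pattern. The third statement and the `match` helper are additions made up for this illustration.

```python
# Each statement is a (subject, predicate, object) tuple.
# The first two come from the text; the third is invented so that a
# query has more than one result to return.
triples = [
    ("Dick", "sees", "Jane"),
    ("ball", "is", "round"),
    ("Jane", "sees", "ball"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return the triples that agree with every part that is not None."""
    return [
        (s, p, o)
        for (s, p, o) in triples
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


print(match(triples, predicate="sees"))
print(match(triples, subject="ball"))
```

Because `Jane` appears as an object in one statement and a subject in another, the statements already chain together, which is how simple triples build up into larger structures.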

RDF triples can be applied equally to all structured, semi-structured and unstructured content. RDF is clearly a most capable data model that — through its ability to be extended with further concepts and relationships (vocabulary) — can create elegant and logical structures to represent comprehensive domains and knowledge bases. Finding such a model has been a quest in my professional life; I believe we finally have a winner to facilitate data interoperability using RDF.

But RDF has not achieved the market acceptance that its suitability as a data representation model might suggest. I think there are three reasons for this:

- First, RDF was initially presented and “sold” as an XML serialization. This failing has been well understood for some time. This unfortunate early linkage caused confusion between the RDF data model and the XML syntax. The rather simple and incremental building blocks of RDF triple statements, when presented in the nested XML syntax, led to lengthy and hard-to-read specifications (for easier reading and use, see either the N3 or Turtle syntaxes).

- Second, triples by definition are 50% more complicated than a key-value pair: three parts instead of two. While the basic RDF statement might be simple like a Dick-and-Jane reader, as a data specification format it is still more complex than my personal attributes of sex:Male and hair:Red and born:California. Those three “facts” cannot be said nearly so quickly in RDF. And, if we also adhere to linked data, each one of these items requires a URI unique identifier to boot! It is important not to ignore the desire for simple and human-readable data-specification formats.

- Third, as this entry began and as we will conclude, RDF and its fuzzy relationship to ontology have led to over-specification of what needs to be included in the data record. What could simply be a record specification of an object and its attributes presented as simple key-value pairs has become burdened with “ontology” and “conceptual” relationships.
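The second point can be seen in a few lines of Python. This is only a sketch: the `http://example.org/` namespace, the `id/` and `prop/` path segments, the identifier `person-1` and the function `struct_to_triples` are all placeholders invented here, not any standard vocabulary or API. The key-value struct needs nothing beyond its three pairs; the triple form has to name a subject for every statement and, under linked data practice, mint a URI for it and for each property.

```python
BASE = "http://example.org/"  # placeholder namespace, an assumption of this sketch


def struct_to_triples(subject_id, struct, base=BASE):
    """Expand a key-value struct into (subject, predicate, object) triples.

    A subject URI is minted from subject_id and a property URI from each key;
    the values are left as plain literals.
    """
    subject = base + "id/" + subject_id
    return [(subject, base + "prop/" + key, value) for key, value in struct.items()]


# The three personal "facts" from the text, as a plain struct.
me = {"sex": "Male", "hair": "Red", "born": "California"}

for triple in struct_to_triples("person-1", me):
    print(triple)
```

The struct is what a person would happily type; the triples are what the canonical form wants. The conversion itself is mechanical, which suggests it need not be the data contributor's job.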

Canonical forms embody all of the specification that the canon guiding them requires. What we may have failed to see in embracing RDF, however, is that getting useful data into the system need not carry all of this burden.

Lightening Up and Shifting Work to the TBox

So, what does all of this have to do with my starting diatribe about the term ontology?

Whether a single database or the federation across all information known to humankind, we have data records (structs of instances) and a logical schema (ontology of concepts and relationships) by which we try to relate this information. This is a natural and meaningful split: structure and relationships v. the instances that populate that structure.

Stated this way, particularly for anyone with a relational database background, the split between schema and data is clear and obvious. Yet, the RDF, semantic Web and linked data communities have done an abysmal job of recognizing this fundamental separation of concerns.

We create “ontologies” that mix instances and schema. We insist on simple data record conversions that are burdened with relationship specifications as well. We tout a “linked data” infrastructure that is based solely on the shared identity of instances without respect or attention to structure or conceptual relationships. We dismiss communities that work to express their data with useful local structures. We insist on standards and practices up and down the data staging and preparation chain that turn off the general market and make us seem arrogant and dismissive. Frankly, in so many ways, we just don’t get it [3].

What has struck me personally over the past few months as these realizations have unfolded has been how much our own mindsets and language may be trapping us.

- Does existing structured data need to be expressed as RDF in order to be useful and integrated?

- Exposing linked and instance data is great, but to what end? What are the conceptual or structural schemas?

- Why is our standards process so inward-looking and parochial (often petty)? What purpose, or whom, does this serve?

At least for this diatribe, my essential conclusion is that we need to shift the burden of the schema and conceptual relations and (yes) world views to the TBox. We need to skinny down the ABox and make it a warm and welcoming environment that any structured data (including the most naïve) can join.

So, ultimately, the bottom line is this: the burden of the semantic Web rests on us, not the providers of structured data.

It is time to streamline the ABox to smooth data contributions, assume as publishers the responsibility for the TBox, and keep those concerns separate. As for instance data, I now intend to refer to it as structs governed by a controlled vocabulary (at most). I intend to reserve ontology as a means to describe a given world view, a TBox, the schema and its relations of the domain at hand. And, frankly, this definition of ontology brings it back in balance with its roots in ontos and the nature of the world.
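One way this division of labor might look in practice is sketched below in Python. Everything named here is hypothetical: the `example.org/schema/` property URIs, the `TBOX_MAPPING` table, the record identifier `rec-1` and the `lift` function are invented for illustration and do not describe irON or any existing tool. The contributor supplies a naïve struct; the publisher maintains the mapping from controlled-vocabulary keys to schema properties on the TBox side.

```python
# Publisher side: maps controlled-vocabulary keys to schema properties.
# The URIs are placeholders, not a real vocabulary.
TBOX_MAPPING = {
    "AUTHOR": "http://example.org/schema/creator",
    "TITLE": "http://example.org/schema/title",
    "YEAR": "http://example.org/schema/issued",
}


def lift(record_id, struct, mapping):
    """Relate a plain struct to the schema without changing the struct itself.

    Returns (triples, unmapped): statements for keys the mapping knows, and
    the list of keys it does not know, which are reported rather than rejected.
    """
    triples, unmapped = [], []
    for key, value in struct.items():
        prop = mapping.get(key.upper())  # keys are matched case-insensitively
        if prop is None:
            unmapped.append(key)
        else:
            triples.append((record_id, prop, value))
    return triples, unmapped


# Contributor side: a skinny struct with no schema knowledge at all.
struct = {"title": "Hyperfinite graph limits", "year": "2008", "pages": "17--23"}

triples, unmapped = lift("rec-1", struct, TBOX_MAPPING)
print(triples)
print(unmapped)
```

An unknown key such as `pages` does not block the contribution; it simply signals to the publisher that the mapping could be extended, which keeps the burden on the schema side.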

It’s a good time to lighten up!

This Friday brown bag leftover was first placed into the AI3 refrigerator on January 22, 2009, and is one of the more popular historical posts of this blog. This reprise is unchanged since its original posting, though we have continued to make progress on constructs such as irON to capture this idea. Microdata in HTML5 is also an important contribution, to which we will devote some attention in the near future.

I endorse the following statement:

“… reserve ontology as a means to describe a given world view … this definition of ontology brings it back in balance with its roots in ontos and the nature of the world”

I don’t fully endorse a strict separation of A-Box and T-Box, since it is quite natural that a particular can be a universal for another particular and so on (see also [1]).

[1] Ferris, Bob; “On Resources, Information Resources and Documents”; smiy.org; 2010