Open Source, Open World, Web, and Semantics to Transform the Enterprise

Ten years ago the message was the end of obscene rents from proprietary enterprise software licenses. Five years ago the message was the arrival and fast maturing of open source. Today, the message is the open world and semantics.

These forces are conspiring to change much within enterprise IT. And, this change will undoubtedly be for the good — for the enterprise. But these forces are not necessarily good news within conventional IT departments and definitely not for traditional vendors unwilling to transform their business models.

I have been beating the tom-tom on this topic for a few months, specifically with regard to the semantic enterprise. But I have by no means been alone or unique. The last two weeks have seen an interesting confluence of reports and commentaries by others that enrich the story of the changing information technology landscape. I’ll be drawing on the observations of Thomas Wailgum (CIO magazine) [1], John Blossom [2] and Andy Mulholland, CTO of Capgemini [3].

The New Normal

“After nearly five decades of gate-keeping prominence, corporate IT is in trouble and at a crossroads like never before in its mercurial and storied history as a corporate function. You may be too big to fail, but you’re not too big to succeed. What will you do?”

Wailgum describes the “New Normal” and how it might kill IT [1]. He picks up on the viewpoint that treats the recent meltdowns in the financial sector as a seismic force for change in information technology. While he acknowledges many past challenges to IT, from PCs and servers to Y2K and software becoming a commodity, he puts the global recession’s impact on business, the “New Normal,” into an entirely different category.

His basic thesis is that these financial shocks are forcing companies to scrutinize IT as never before, in particular “unfavorable licensing agreements and much-too-much shelfware; ill-conceived purchasing and integration strategies; and questionable software married to entrenched business processes.”

Yet, he also argues that IT and its systems are too ingrained into the core business processes of the enterprise to be allowed to fail. IT systems are now thoroughly intertwined with:

- ERP systems – the financial, administrative and procurement backbone of every organization

- Business development and BI

- Operations and forecasting

- Customer service and call centers

- Networking and security

- Sales and marketing via CRM and lead generation

- Supply chain applications in manufacturing and shipping.

But top management is disappointed and disaffected. IT systems gobble up too many limited resources. They are inflexible. They are old and require still more limited resources to modernize. They are complex. They create and impose delays. And all of these negatives lead to huge opportunity costs. Wailgum cites Gartner, for instance, as saying that by 2012 perhaps 20 percent of businesses will own no IT assets at all in their desire to outsource this headache.

“Enterprise systems are doing it wrong. And not just a little bit, either. Orders of magnitude wrong. Billions and billions of dollars worth of wrong. Hang-our-heads-in-shame wrong. It’s time to stop the madness.”

I think this devastating diagnosis is largely correct, though perhaps incomplete in that no mention is made of the flipside: what IT has failed to deliver. I think this flipside is equally damning.

Despite decades of trying, IT still has not broken down the data stovepipes in the enterprise. Rather, they have proliferated like rabbits. And, IT has failed to unlock the data in the 80% of enterprise information contained within documents (unstructured data).

Unfortunately, after largely zeroing in and mostly diagnosing the situation, Wailgum’s remedy comes off sounding like a tired 12-step program. He argues for new mindsets, better communications, getting in touch with customers, being willing to take risks, and being nimble. Well, duh.

So, over the decades of IT failures there have been accompanying decades of criticism, hand-wringing, and hackneyed solutions. Without some more insightful thinking, this analysis can make our understanding of the New Normal look pretty old.

Not Necessarily Good News for Vendors

John Blossom [2] picks up on these arguments and looks at the issues from the vendor’s perspective. Blossom characterizes Wailgum’s piece as “outlining the enormous value gap that’s been arising in enterprise information technologies.” And, while clearly new approaches are needed and farming them out may become more prevalent, Blossom cautions this is not necessarily good news for vendors.

“. . . the trend towards agnosticism in finding solutions to information problems is only going to get stronger. Whatever platform, tool or information service can solve the job today will get used, as long as it’s affordable and helps major organizations adapt to their needs.”

As Blossom puts it, “what seems to be happening is that many of the business processes through which these enterprises survived and thrived over the past several decades are shooting blanks. . . . many of the fundamental concepts of IT that have been promoted for the past few decades no longer give businesses operational advantages but they have to keep spending on them anyway.”

As he has been arguing for quite some time, one fundamental change agent has been the Web itself. “The Web has accelerated the flow of information and services that can lead to effective decision-making far more rapidly than enterprise IT managers have been able to accommodate.”

Web search engines and social media tools can begin to replace some of the dedicated expenditures and systems within the enterprise. Moreover, the extent, growth and value of external data and content is readily apparent. Without outreach and accommodation of external data — even if it can solve its own internal data federation challenges — the individual enterprise is at risk of itself becoming a stovepipe.

Prior focuses on strategy and capturing workflows are perhaps being supplanted by the need for operational flexibility and on-the-fly aggregation and rapid service development tools. In an increasingly interconnected and rapidly changing world with massive information growth, being able to control workflows and to depend on central IT platforms may become last decade’s “Old Normal.” Floating on top of these massive forces and riding with their tides is a better survival tactic than digging fixed emplacements in the face of the tsunami.

These factors of Web, open source, agnosticism as to platform or software applications, and the need to mash up innovations from anywhere are not the traditional vendor game. Just as businesses and their IT departments must get leaner, so must vendors’ expectations of extorting exorbitant rents from their clients. “Fasten your seatbelts, it’s going to be a bumpy night!” [4]

So, Blossom agrees with the Wailgum diagnosis, but also helps us begin to understand parts of the cure. Blossom argues the importance of:

- Web approaches and architectures

- Incorporation of external data

- Leverage of Web applications, and

- Use of open standards and APIs to avoid vendor lock-in.

Much of this, if not all, can be provided by open source. But open source is not a sine qua non: commercial products that embrace these approaches can also be compatible components across the stack.

A Semantic Lever on an Open World Fulcrum

But — even with these components — a full cure still lacks a couple of crucial factors.

These remaining gaps are emphasized in Andy Mulholland’s recent blog post [3]. His post was occasioned by the press announcement that Structured Dynamics (my firm) had donated its Semantic Enterprise Adoption and Solutions, or SEAS, methodology to MIKE2.0 [5]. Mulholland was suggesting his audience needed to know about this Method for an Integrated Knowledge Environment because some of the major audit partnerships have decided to get behind MIKE2.0 with its explicit and open source purpose of managing knowledge environments and their data and provenance.

“In ‘closed’ — or some might say normal — IT environments where all data sources can be carefully controlled, all statements are taken to be false unless explicitly known to be true. However most ‘new’ data is from the ‘open’ environment of the web and in semantic data. If this is not specifically flagged as true it is categorised as ‘unknown’ rather then false. This single characteristic to me is in many ways the most crucial issue to understand as we go forward into using mixed data sets to support complex ‘business intelligence’ or ‘decision support’ around externally driven events, and situations.”

As Mulholland notes, “. . . it’s not just more data, it’s the forms of data, and what the data is used for, all of which add to the complications. . . . Sadly the proliferation of data has mostly been in unstructured data in formats suitable for direct human use.”

One remaining factor is thus how to extract meaning from unstructured (text) content. It is here that semantics and various natural language processing (NLP) components come in. Implied in the incorporation of data extracted from unstructured sources is a data model expressly designed for such integration.

Yet, without a fulcrum, the semantic lever still cannot move the world. Mulholland insightfully nails this fundamental missing piece, the “most crucial issue,” as the use of the open world assumption.

From an enterprise perspective and in relation to the points of this article, an open world assumption is not merely a different way to look at the world. More fundamentally, it is a different way to do business and a very different way to do IT.

I have summarized these points before, but they deserve reiteration. Open world frameworks provide some incredibly important benefits for knowledge management applications in the enterprise:

- Domains can be analyzed and inspected incrementally

- Schema can be incomplete and developed and refined incrementally

- The data and the structures within these open world frameworks can be used and expressed in a piecemeal or incomplete manner

- We can readily combine data with partial characterizations with other data having complete characterizations

- Systems built with open world frameworks are flexible and robust; as new information or structure is gained, it can be incorporated without negating the information already resident, and

- Open world systems can readily bridge or embrace closed world subsystems.
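The contrast Mulholland draws, and the incremental behavior in the list above, can be illustrated with a small Python sketch. This is only an illustration under stated assumptions: the `FactStore` class, the `Truth` values and the sample statements about "acme" are all hypothetical, and a real deployment would rely on RDF and OWL tooling rather than hand-rolled sets.

```python
from enum import Enum


class Truth(Enum):
    TRUE = "true"
    FALSE = "false"
    UNKNOWN = "unknown"


class FactStore:
    """Hypothetical store of (subject, predicate, object) statements."""

    def __init__(self):
        self.asserted = set()  # statements known to be true
        self.denied = set()    # statements explicitly known to be false

    def add(self, s, p, o, holds=True):
        # Adding a statement never removes or negates what is already stored.
        (self.asserted if holds else self.denied).add((s, p, o))

    def ask_closed(self, s, p, o):
        # Closed world: anything not explicitly known to be true is false.
        return Truth.TRUE if (s, p, o) in self.asserted else Truth.FALSE

    def ask_open(self, s, p, o):
        # Open world: a missing statement is "unknown", not "false".
        if (s, p, o) in self.asserted:
            return Truth.TRUE
        if (s, p, o) in self.denied:
            return Truth.FALSE
        return Truth.UNKNOWN


store = FactStore()
store.add("acme", "is_customer_of", "us")
store.add("acme", "is_supplier_to", "us", holds=False)

# Nothing has been said yet about where acme is based.
closed_answer = store.ask_closed("acme", "based_in", "Ohio")
open_answer = store.ask_open("acme", "based_in", "Ohio")

# New information arrives later and is simply added to the store.
store.add("acme", "based_in", "Ohio")
updated_answer = store.ask_open("acme", "based_in", "Ohio")
still_customer = store.ask_open("acme", "is_customer_of", "us")
```

Under the closed world reading, the missing location is reported as false; under the open world reading it is reported as unknown until the fact arrives, at which point it becomes true without disturbing anything previously recorded.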

The apocryphal quote, “Give me a lever long enough and a fulcrum on which to place it, and I shall move the world,” is attributed to Archimedes [6]. I have also had lawyer friends tell me that the essence of many court cases is found in a single pivotal assertion or statement in the arguments. I think it fair to say that the open world approach plays such a central role in unlocking the adaptive way for IT to move forward.

Bringing the Factors Together via Open SEAS

As Mulholland notes, we have donated our Open SEAS methodology [7] to MIKE2.0 in the hopes of seeing greater adoption and collaboration. This is useful, and all are welcome to review, comment on and contribute to the methodology, as is indeed the case for all aspects of MIKE2.0.

But the essential point of this article is that Open SEAS also embraces most — if not all — of the factors necessary to address the New Normal IT function.

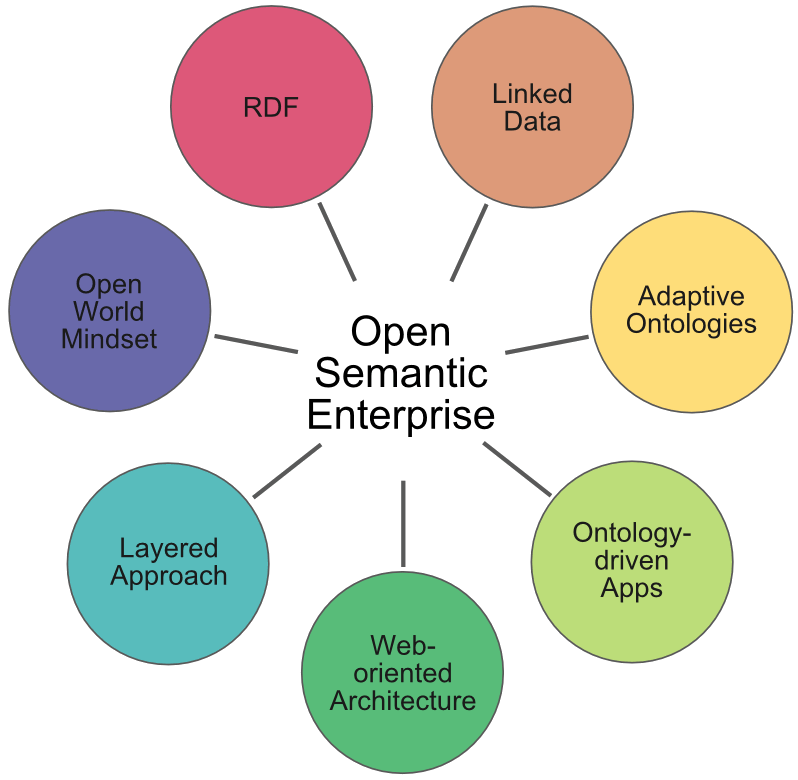

Open SEAS is explicitly designed to facilitate becoming an open semantic enterprise. Namely, this means an organization that uses the languages and standards of the semantic Web, including RDF, RDFS, OWL, SPARQL and others to integrate existing information assets, using the best practices of linked data and the open world assumption, and targeting knowledge management applications. It does so based on Web-oriented architectures and approaches and uses ontologies as an “integration layer” across existing assets.
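To make the idea of an “integration layer” a little more concrete, here is a minimal sketch in plain Python. It is not RDF, OWL or SPARQL, and it is not taken from the Open SEAS methodology itself: the `ex:` terms, the `MAPPINGS` table, the sample CRM and ERP rows and the helper functions are all assumptions made up for illustration. The point is only that existing systems stay as they are while a shared vocabulary sits on top of them.

```python
# Two existing systems describe the same customer with different field names.
# Both row sets are invented sample data.
crm_rows = [{"cust_id": "C-17", "cust_name": "Acme Corp"}]
erp_rows = [{"customer_no": "C-17", "credit_limit": 50000}]

# Hypothetical mapping from each source's fields to shared vocabulary terms.
MAPPINGS = {
    "crm": {"key": "cust_id", "fields": {"cust_name": "ex:name"}},
    "erp": {"key": "customer_no", "fields": {"credit_limit": "ex:creditLimit"}},
}


def to_triples(source, rows):
    """Express source rows as (subject, predicate, object) statements."""
    mapping = MAPPINGS[source]
    for row in rows:
        subject = "ex:customer/" + row[mapping["key"]]
        for field, predicate in mapping["fields"].items():
            if field in row:  # partial records are acceptable
                yield (subject, predicate, row[field])


# The sources are left in place; only their statements are combined.
graph = set(to_triples("crm", crm_rows)) | set(to_triples("erp", erp_rows))


def describe(graph, subject):
    """Collect everything currently known about one subject."""
    return {p: o for s, p, o in graph if s == subject}


acme = describe(graph, "ex:customer/C-17")
```

Here `acme` ends up holding both the name from the CRM rows and the credit limit from the ERP rows. Adding a third source would mean adding one more entry to the mapping table, with no change to the systems already covered.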

The foundational approaches to the open semantic enterprise do not necessarily mean open data nor open source (though they are suitable for these purposes with many open source tools available). The techniques can equivalently be applied to internal, closed, proprietary data and structures. The techniques can themselves be used as a basis for bringing external information into the enterprise. ‘Open’ is in reference to the critical use of the open world assumption.

These practices do not require replacing current systems and assets; they can be applied equally to public or proprietary information; and they can be tested and deployed incrementally at low risk and cost. The very foundations of the practice encourage a learn-as-you-go approach and active and agile adaptation. While embracing the open semantic enterprise can lead to quite disruptive benefits and changes, the adoption itself can be accomplished with minimal disruption. This is its most compelling aspect.

We believe this offers IT an exciting, incremental and low-risk path for moving forward. All existing assets can be left in place and — in essence — modernized in place. No massive shifts and no massive commitments are required. As benefits and budgets allow, the extent of the semantic interoperability layer may be extended as needed and as affordable.

The open semantic enterprise is neither magic nor a panacea. Simply consider it as bringing rationality to what has become a broken IT system. Embracing the open semantic enterprise can help the New Normal be a good and more adaptive normal.

Home run!

Great post! In 2010, consumer collaboration tools are finding their way into the enterprise, but they are not really equipped for business. The result is the need for new standards such as you talk about. Also emerging are open platform applications (a little like apps on the iPhone!), which are definitely disruptive for traditional vendors.