We’ll Try to be as ‘Pythonic’ as Possible in the Design

In past efforts, we have produced self-contained semantic technology platforms (for one, the Open Semantic Framework, since retired) based on objectives similar to those we have set for this CWPK series. However, with Cooking with Python and KBpedia, our audience is the newbie committed to learning more, not the enterprise. The approaches presented in this series may well be adaptable for enterprise use, but to maximize the training value of the series we prefer to emphasize off-the-shelf, ‘glue-together’ components that use a common and fairly easy-to-learn language, Python. Our objective here is not commercial performance and security, but learnability and understandability.

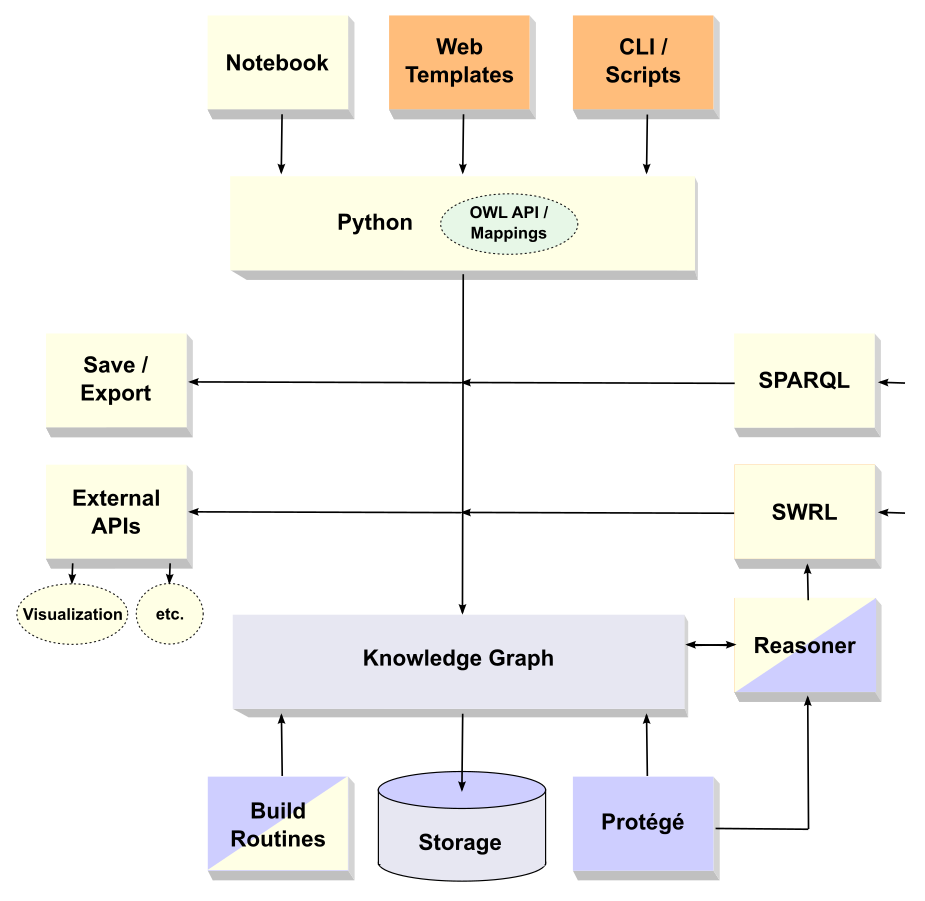

Our design places the knowledge graph at the center, as shown in the architecture diagram, surrounded by Python-based applications shown in yellow. The knowledge graph in our instance, KBpedia, is written in OWL 2, the Web Ontology Language standard of the World Wide Web Consortium (W3C). However, what we are outlining here, including the possible extensions of KBpedia into your own domain of interest, can apply to any knowledge graph using W3C open standards. OWL 2, as we implement it, embraces the other W3C standards of the Resource Description Framework (RDF) and its schema extension (RDFS). We also use SKOS (Simple Knowledge Organization System), an RDF-based W3C vocabulary that provides a language of hierarchies, classification, and labels familiar to librarians and information scientists.[1] Note that all of these standards are completely independent of Python, or of any programming language for that matter. These standards follow description logics and enable logical manipulation and analysis of their knowledge representations (KR).

Historically, many programming languages have been used to manage, store, and manipulate these W3C standard KR languages. For at least the past 15 years, Java has been the dominant programming language for semantic technology applications, most often accounting for more than half of all tools.[2] From an enterprise standpoint, Java-based applications may still be the most defensible choice. But we want our architecture to embrace a single language, Python, which has strong connections in some areas and perhaps weak ones in others. As with any language choice, there are trade-offs. Working through those trade-offs for Python is an explicit topic in this CWPK series.

The architecture diagram reflects these considerations. At the top we have inputs into the Python-based system, based on electronic notebooks, Web templates where user interactions send directives to the system, or direct command line interfaces (CLI). Because electronic notebooks are interactive and can display invoked apps, we will use the notebook interface for most installments in this series. We include some CLI examples for quick responses. We also include Web page examples of how one might drive these Python-based applications from the choices users make as they interact with a Web site. This latter input style is very important, since interaction with knowledge graphs should be a distributed activity across normal workflows. Stopping to invoke a separate application space whenever new knowledge is encountered or questioned is unnatural and leads to little or no adoption. If we are to take advantage of these knowledge technologies, we must integrate them into our current work activities.

These possible sources of input would be best served by having a Python interface or API that maps the basic class, instance, property, and value perspectives of the W3C standards into native Python constructs. This will allow us to abstract knowledge graph specifications into natural Python code. We show this unspecified (at this time) ‘OWL API / Mappings’ component in green in the diagram. This pivotal component will receive much attention throughout the ensuing series.
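To make the idea of mapping W3C perspectives into native Python constructs a little more concrete, here is a minimal sketch using only the standard library. It is not the ‘OWL API / Mappings’ component itself, which remains unspecified at this point. The names `KGClass`, `KGInstance`, and the `http://example.org/kb#` namespace are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical namespace, used only for this illustration.
EX = "http://example.org/kb#"


@dataclass
class KGClass:
    """A class in the knowledge graph, with optional parent classes."""
    name: str
    parents: list["KGClass"] = field(default_factory=list)

    def ancestors(self) -> set[str]:
        """Return the names of all superclasses, walking parents upward."""
        found: set[str] = set()
        stack = list(self.parents)
        while stack:
            cls = stack.pop()
            if cls.name not in found:
                found.add(cls.name)
                stack.extend(cls.parents)
        return found


@dataclass
class KGInstance:
    """An individual: a member of one class, with property/value pairs."""
    name: str
    cls: KGClass
    properties: dict[str, str] = field(default_factory=dict)

    def triples(self) -> list[tuple[str, str, str]]:
        """Flatten the instance into (subject, predicate, object) triples."""
        subject = EX + self.name
        result = [(subject, "rdf:type", EX + self.cls.name)]
        for prop, value in self.properties.items():
            result.append((subject, EX + prop, value))
        return result


# Classes, an instance, a property, and a value, all as plain Python objects.
animal = KGClass("Animal")
mammal = KGClass("Mammal", parents=[animal])
rex = KGInstance("Rex", mammal, {"hasName": "Rex"})

for triple in rex.triples():
    print(triple)
```

The point of the sketch is only that classes, instances, properties, and values can each have a natural Python counterpart. A real mapping layer would also need to handle datatypes, annotations, and the full set of OWL 2 axioms.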

This Python input is geared to access and manage the knowledge graph, shown at the bottom of the diagram. The knowledge graph needs its own storage to be persistent. (We do not spend further time on this component, other than to say that systems should be designed to interface with external storage, not incorporate specific ones. Storage is a commodity component.) Ontologies, or knowledge graphs, already have an excellent open-source integrated development environment (IDE) in the Protégé application, developed by Stanford University.

We can see these major components in the architecture diagram. The Python components are shown in yellow; the knowledge graph (KBpedia) in gray; and external tools for the knowledge graph in blue. Two split boxes show that both existing external apps and Python ones are possible for those functions.

The diagram shows that inputs or requests of the knowledge graph may come from specific functional components such as querying (SPARQL) or rule-setting (SWRL), or from programmatic requests coming from user interfaces or external sources (yellow and orange). Also, in a loosely coupled manner, we want outputs from our system to be flexible enough to tailor to various file formats or external APIs. This interface point is where the system connects to external systems, for example to power machine learning or natural language applications. Knowing how to stage and format outputs is a key task of the design.
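One loosely coupled way to stage outputs is to keep the graph content in a neutral in-memory form and register one small exporter per target format. The sketch below assumes triples held as plain tuples and shows only CSV and JSON; the function names and the `EXPORTERS` registry are hypothetical, not part of any existing library.

```python
import csv
import io
import json

Triple = tuple[str, str, str]

# Sample data, invented for this illustration.
TRIPLES: list[Triple] = [
    ("ex:Mammal", "rdfs:subClassOf", "ex:Animal"),
    ("ex:Rex", "rdf:type", "ex:Mammal"),
]

COLUMNS = ("subject", "predicate", "object")


def to_csv(triples: list[Triple]) -> str:
    """Serialize triples as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(COLUMNS)
    writer.writerows(triples)
    return buffer.getvalue()


def to_json(triples: list[Triple]) -> str:
    """Serialize triples as a JSON array of subject/predicate/object records."""
    records = [dict(zip(COLUMNS, triple)) for triple in triples]
    return json.dumps(records, indent=2)


# Registry of exporters; supporting a new format means adding one entry here.
EXPORTERS = {"csv": to_csv, "json": to_json}


def export(triples: list[Triple], fmt: str) -> str:
    """Dispatch to the exporter registered for the requested format."""
    try:
        exporter = EXPORTERS[fmt]
    except KeyError:
        raise ValueError(f"unsupported format: {fmt!r}") from None
    return exporter(triples)


print(export(TRIPLES, "csv"))
print(export(TRIPLES, "json"))
```

Because callers only know the `export` function and a format name, an exporter for a machine learning pipeline or an external API could be added later without touching the code that reads the knowledge graph.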

Protégé plays an integral role in this architecture. First, it is the common denominator for talking about the system, since this tool is ubiquitous in the semantic technology space. Second, most users have only manipulated knowledge graphs through this interface. Our Python-based system must duplicate this functionality and show how we can go well beyond it. Moreover, there are many ontology or knowledge graph management tasks where Protégé is the go-to choice. Searching, navigating, and visualizing are some of the key strengths of Protégé. The objective is not to replace Protégé, but to complement it. Protégé has an organizational view of knowledge graphs; what we want is a knowledge view of knowledge graphs. We thus use Protégé as a common touchstone as we work through our installments.

Protégé can host reasoners, as can our Python code, which is why that component is shown in dual blue-yellow colors. Another dual component is the build routines. This part of the architecture is deceptively critical, since we need to both: 1) logically test the knowledge graph for coherence and consistency as we add to or build it; and 2) enable round-tripping between build and W3C formats.
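The two build requirements can be illustrated with a small standard-library sketch. It assumes a hypothetical three-column CSV build file containing full IRIs, and it covers only IRI-valued triples in N-Triples, the simplest W3C serialization. The cycle check is a purely structural test, far weaker than what a reasoner does; a subclass cycle is legal in OWL, but in a build it usually signals an error.

```python
import csv
import io

Triple = tuple[str, str, str]

SUBCLASS_OF = "http://www.w3.org/2000/01/rdf-schema#subClassOf"
EX = "http://example.org/kb#"  # hypothetical namespace for illustration


def read_build_file(text: str) -> list[Triple]:
    """Parse a three-column CSV build file into triples."""
    return [(s, p, o) for s, p, o in csv.reader(io.StringIO(text))]


def to_ntriples(triples: list[Triple]) -> str:
    """Serialize IRI-only triples as N-Triples lines."""
    return "".join(f"<{s}> <{p}> <{o}> .\n" for s, p, o in triples)


def from_ntriples(text: str) -> list[Triple]:
    """Parse IRI-only N-Triples (no literals or blank nodes) into triples."""
    triples = []
    for line in text.splitlines():
        if not line.strip():
            continue
        s, p, o, dot = line.split()
        if dot != ".":
            raise ValueError(f"malformed line: {line!r}")
        triples.append((s[1:-1], p[1:-1], o[1:-1]))  # strip the < > brackets
    return triples


def find_subclass_cycles(triples: list[Triple]) -> set[str]:
    """Return classes that are, directly or indirectly, subclasses of themselves."""
    parents: dict[str, set[str]] = {}
    for s, p, o in triples:
        if p == SUBCLASS_OF:
            parents.setdefault(s, set()).add(o)

    cyclic = set()
    for start in parents:
        seen = set()
        stack = list(parents[start])
        while stack:
            node = stack.pop()
            if node == start:
                cyclic.add(start)
                break
            if node not in seen:
                seen.add(node)
                stack.extend(parents.get(node, ()))
    return cyclic


BUILD_TEXT = (
    f"{EX}Mammal,{SUBCLASS_OF},{EX}Animal\n"
    f"{EX}Dog,{SUBCLASS_OF},{EX}Mammal\n"
)

triples = read_build_file(BUILD_TEXT)

# 1) Test the build for a simple structural problem before accepting it.
print("cycles:", find_subclass_cycles(triples))

# 2) Round-trip: build format -> N-Triples -> back to the same triples.
print("round-trip ok:", from_ntriples(to_ntriples(triples)) == triples)
```

In practice, the coherence and consistency tests would be delegated to a reasoner, and the round-trip would need to cover literals, annotations, and the other constructs that this sketch deliberately leaves out.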

We see at least two payoffs from pursuing an architecture such as this. One, we gain a dual programmatic and interactive environment for managing a knowledge graph and keeping it current. Two, we provision an engine for feeding external APIs in areas such as machine learning, natural language understanding, and interoperability.